I thought watermarking was a trivial concept until I encountered cross-stream joins and out-of-order data. Handling unexpected event-time skew and late-arriving data across multiple streams requires more than a basic configuration, something the documentation often overlooks. This post is a technical deep dive into the lessons I learned while debugging state expiration and late-arrival logic in complex streaming pipelines that I developed and deployed at work.

👋 I'm Lam, a data engineer.

I write about data engineering, web development, and other technology stuff...

Spark Structured Streaming ordered write

Distributed messaging systems like Kafka are built for throughput and fault tolerance, not global ordering. A Kafka topic splits data across multiple partitions: each partition maintains its own internal order, but there is no ordering guarantee across partitions. When Spark reads from multiple partitions in parallel, records reach the executors in the order the partitions deliver them, not in event-time order. A transaction timestamped 10:00:03 sitting in a lagging partition will arrive after a transaction timestamped 10:00:47 from a faster one. From Spark's perspective, the later event came first.

This breaks any application where output correctness depends on sequence:

- Transaction listings — rows must be displayed in the order they occurred. Out-of-order writes mean a user sees their payment history shuffled.

- Running balance calculations — each row's balance is derived from all prior rows. A single late-arriving event invalidates every balance computed after it.

This post covers how to tackle this in Spark Structured Streaming using watermarking, stateful operations, and controlled write semantics.
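To make the idea concrete, here is a minimal pure-Python sketch of watermark-based reordering. It is not Spark code and not necessarily the approach the post takes: `Event`, `ordered_emit`, `running_balances`, and `allowed_lateness` are hypothetical names invented for illustration, and the watermark here advances on every record, whereas Spark advances it between micro-batches.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Event:
    event_time: int                      # seconds past 10:00:00 (illustrative)
    amount: int = field(compare=False)   # ordering uses event_time only


def ordered_emit(arrivals, allowed_lateness):
    """Buffer events and release them in event-time order.

    An event is released once the watermark (max event time seen minus
    allowed_lateness) has passed it. Events older than the watermark at
    arrival time are too late and are set aside instead of emitted.
    """
    buffer = []       # min-heap keyed on event_time
    max_seen = None   # highest event time observed so far
    emitted, dropped = [], []

    for ev in arrivals:
        if max_seen is not None and ev.event_time < max_seen - allowed_lateness:
            dropped.append(ev)           # arrived behind the watermark
            continue
        heapq.heappush(buffer, ev)
        max_seen = ev.event_time if max_seen is None else max(max_seen, ev.event_time)
        watermark = max_seen - allowed_lateness
        while buffer and buffer[0].event_time <= watermark:
            emitted.append(heapq.heappop(buffer))

    while buffer:                        # end of stream: flush what is left
        emitted.append(heapq.heappop(buffer))
    return emitted, dropped


def running_balances(events):
    """Cumulative balance per event, valid only if events are in order."""
    total, out = 0, []
    for ev in events:
        total += ev.amount
        out.append((ev.event_time, total))
    return out


# The 10:00:47 transaction arrives before the 10:00:03 one, as in the text.
arrivals = [Event(47, -20), Event(3, 100), Event(50, 5), Event(120, 10), Event(10, 1)]
emitted, dropped = ordered_emit(arrivals, allowed_lateness=60)
balances = running_balances(emitted)
```

The trade-off is latency: nothing is written until the watermark passes it, so a larger `allowed_lateness` tolerates slower partitions at the cost of delayed output, and anything later than that still has to be handled separately.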

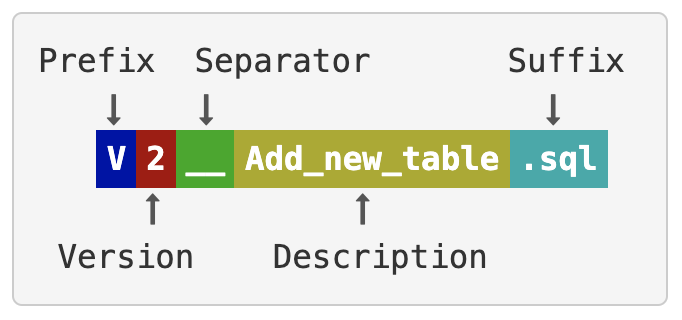

db-schemachange

db-schemachange is a simple, lightweight Python-based tool to manage database objects for Databricks, Snowflake, MySQL, Postgres, SQL Server, and Oracle. It follows an imperative-style approach to Database Change Management (DCM) and was inspired by the Flyway database migration tool. When combined with a version control system and a CI/CD tool, database changes can be approved and deployed through a pipeline using modern software delivery practices. As such, schemachange plays a critical role in enabling Database (or Data) DevOps.

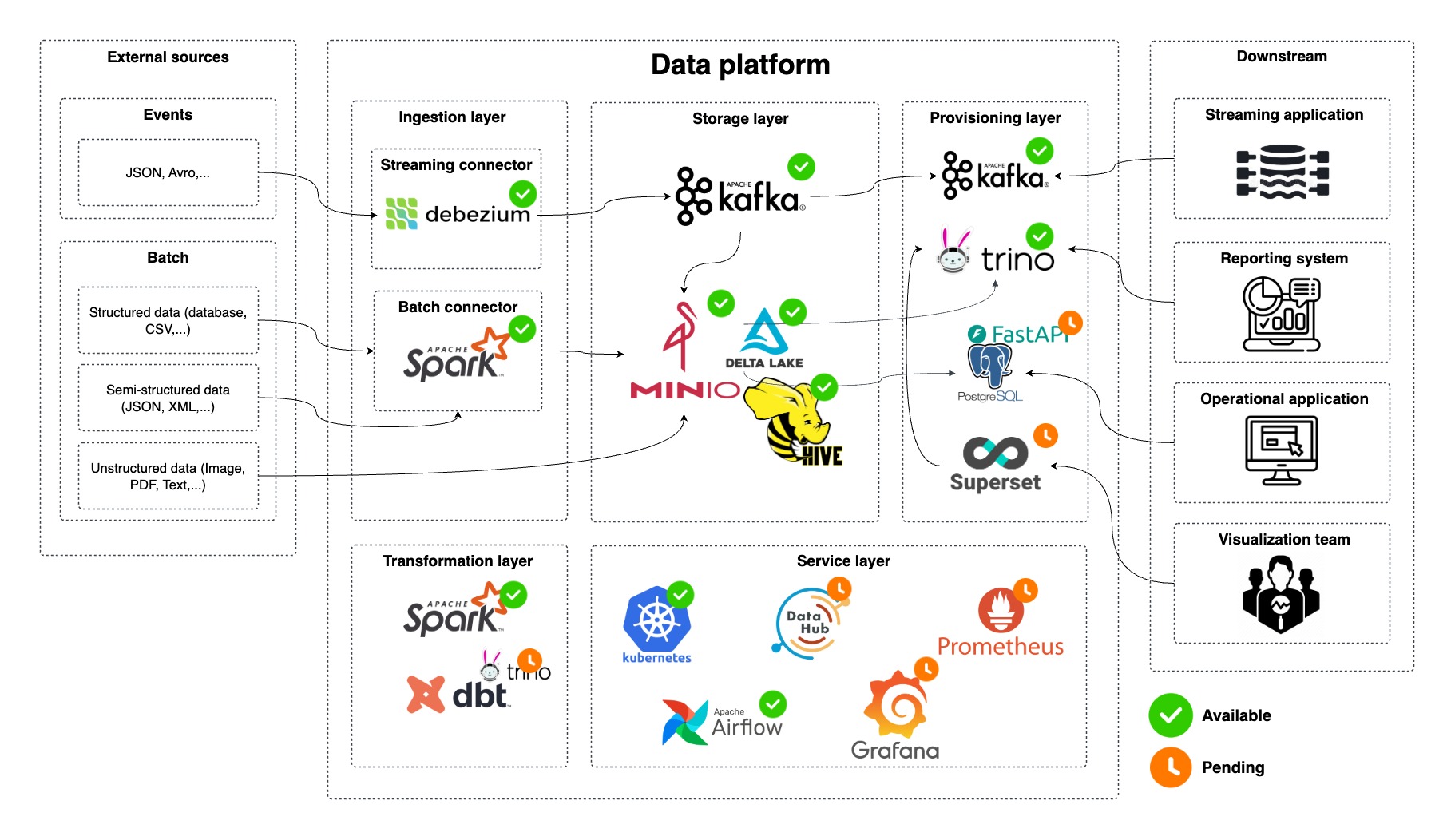

Cloud Native Data Platform

This platform leverages cloud-native technologies to build a flexible and efficient data pipeline. It supports various data ingestion, processing, and storage needs, enabling real-time and batch data analytics. The architecture is designed to handle structured, semi-structured, and unstructured data from diverse external sources.

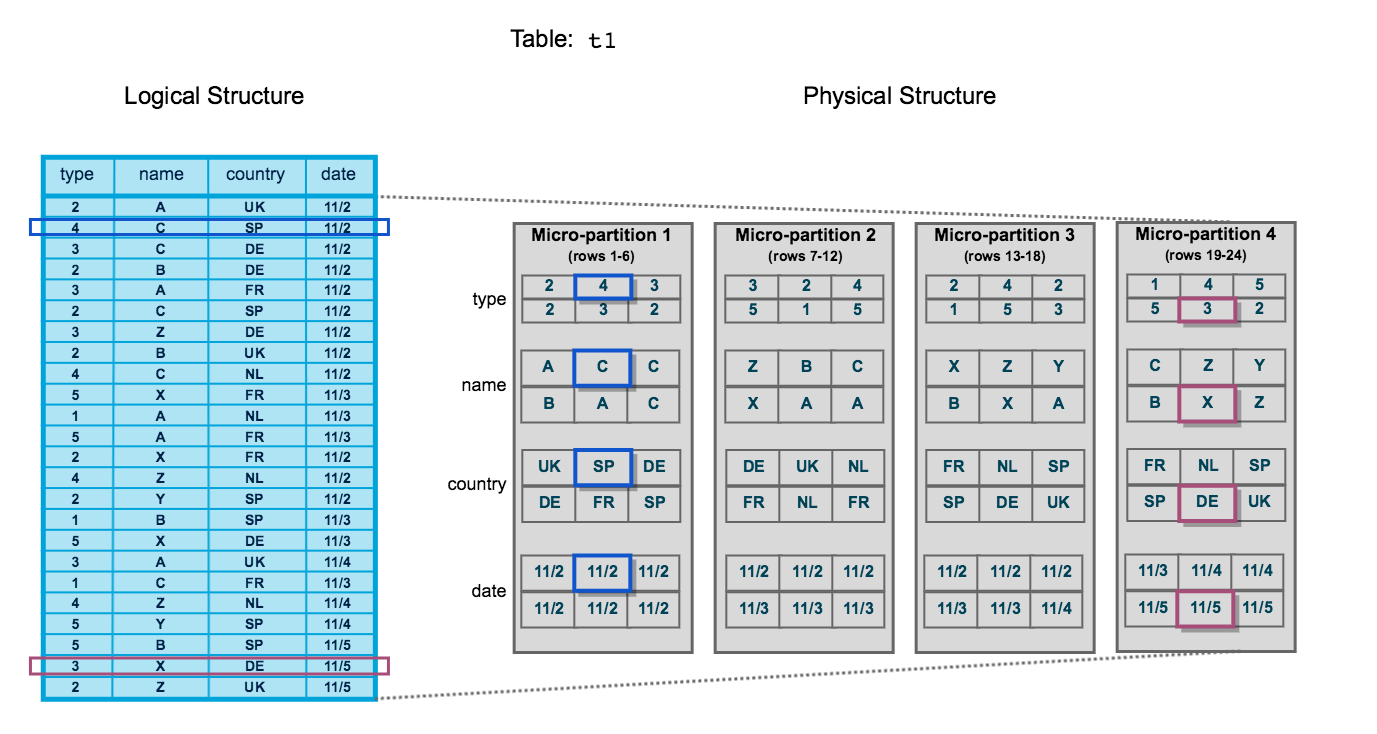

Understanding Snowflake micro-partitions

Snowflake is one of the most popular data warehouse solutions today because of the wide range of features it offers for building a complete data platform. Understanding the Snowflake storage layer not only shows how it organizes data under the hood, but is also crucial for optimizing the performance of your queries.