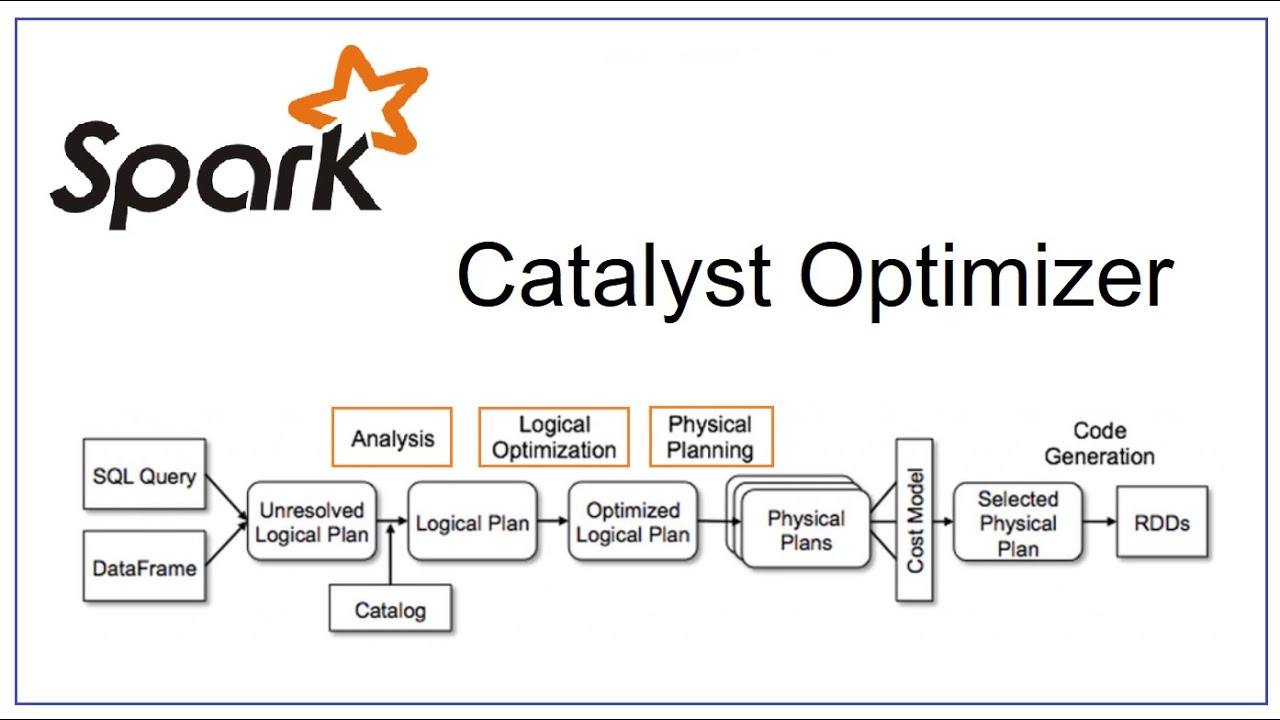

The Spark Catalyst optimizer sits at the core of Spark SQL. Its purpose is to optimize structured queries, whether expressed in SQL or through the DataFrame/Dataset APIs, in order to reduce application running time and cost. Many Spark users treat Catalyst as a black box, assuming it works mysteriously without caring what happens inside it. In this article, I go in depth into its logic, its components, and how Spark session extensions can take part in changing Catalyst's plans.

MySQL series - Indexing

Indexing is a way to make queries faster, and it is a very important part of improving performance. For large tables, well-chosen indexes can increase overall query speed; however, indexing is often overlooked during table design. This article covers the types of indexes and how to use them properly.

MySQL series - Multiversion concurrency control

Storage engines usually do not rely on a simple row-locking mechanism. To achieve good performance in highly concurrent read/write environments, they implement row-level concurrency control in a more sophisticated way, and the most commonly used method is multiversion concurrency control (MVCC).

MySQL series - Transaction In MySQL

The next article in the MySQL series is about transactions, a very common operation in MySQL in particular and in relational databases in general. Let's dive in.

MySQL series - MySQL Architecture Overview

Hello everyone! Recently I have been researching MySQL, because I think anyone doing data engineering should know at least one relational database in depth. Once you deeply understand one RDBMS, you can easily learn others, since they share many similarities. Over the next few posts I will run a series about MySQL, and this is the first article.

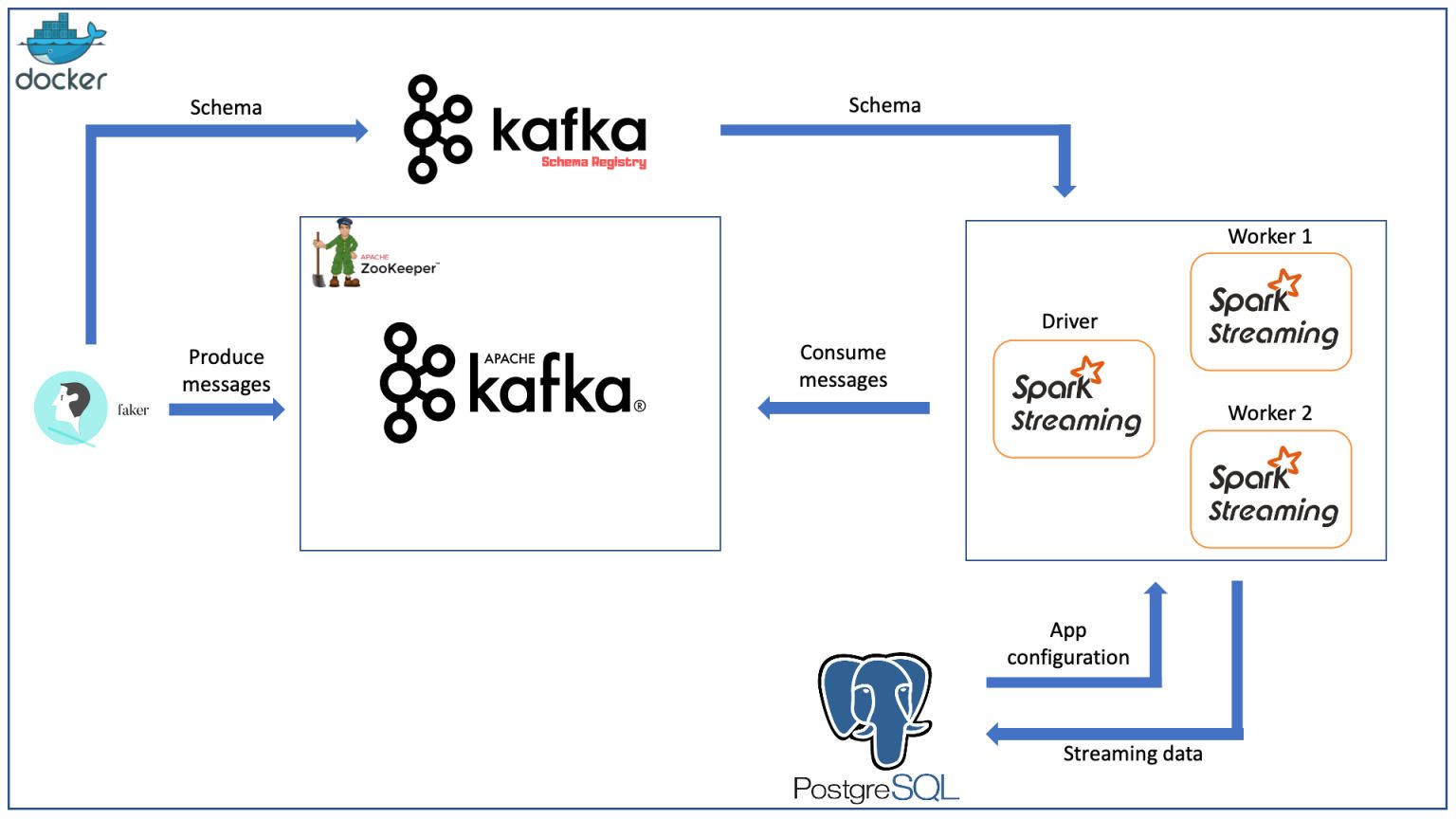

Create A Data Streaming Pipeline With Spark Streaming, Kafka And Docker

Hi guys, I'm back after a long break from writing. Today I want to share how to create a Spark Streaming pipeline that consumes data from Kafka, with everything running on Docker.

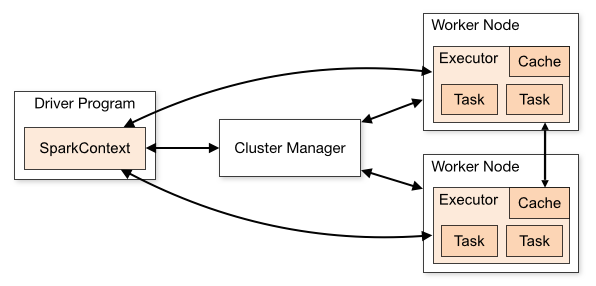

Create A Standalone Spark Cluster With Docker

Lately, I've spent a lot of time teaching myself how to build Hadoop and Spark clusters, integrate Hive, and more. This article shows how you can use Docker to build a standalone Spark cluster for data processing, with one master node and two worker nodes. (In upcoming articles, I may cover a Hadoop cluster that uses YARN as its resource manager.) Let's dive in.

Some AI/ML interview questions

Recently, AI/ML has become a trend, and everyone everywhere is into AI. Students are flocking to study AI, and universities are gradually opening courses on machine learning, artificial intelligence, and computer vision to "keep up".

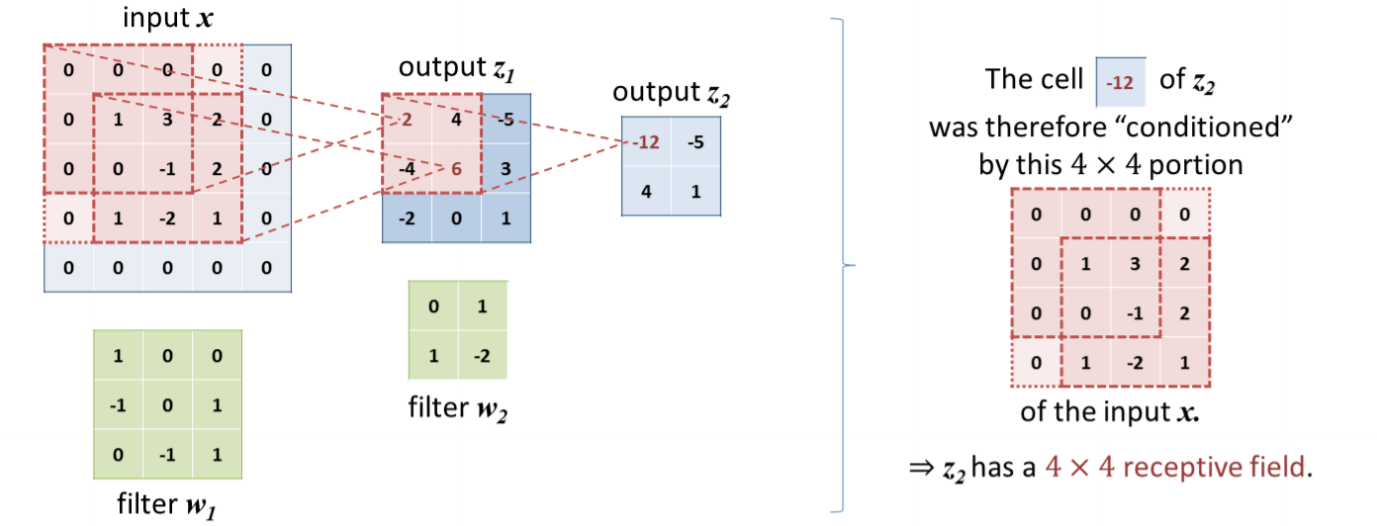

Receptive field in computer vision

In this article, I want to talk about the receptive field, a very important concept in computer vision that every learner needs to know, because it explains why people want to build deeper networks. Let's dive in.

Basic linear algebra - Part 1

Next up is a series of articles on linear algebra, which I relearned while reading the book Mathematics for Machine Learning during my studies of Machine Learning and AI. This is the first part of the series.